A couple of weeks ago, the University of Sydney proudly announced that 31 of its subjects were ranked in the top 50 in the world. According to the QS 2020 Subject Rankings, USyd placed 4th in the world for sport and physical therapy, 13th for law and 1st in Australia (and in the top 20 globally) for performing arts.

University rankings are closely watched by the media and the tertiary education sector. They condense the vague notion of a university’s quality into a nice, neat, comparable number. And a good rank is an attractive selling point to employers, students and potential sources of research funding.

However, a closer look at leading university rankings reveals that they tend to ignore criteria which are most relevant and pressing to students, such as teaching quality, overall student experience and graduate outcomes.

The three most high-profile university rankings are the QS World University Rankings (QS), Times Higher Education World University Rankings (THE) and Academic Ranking of World Universities (ARWU). Each system also publishes separate lists for subject areas and geographical regions, as well as more tailored measures regarding, for example, graduate employability or young universities. Their methodologies suffer from two main problems: firstly, an unbalanced emphasis on research; and secondly, an over-reliance on subjective metrics.

40% of a university’s QS ranking is based on its reputation among academics, who are surveyed annually on which institutions they believe represent the best in teaching and research in their field. THE, similarly, gives teaching and research surveys a combined weight of 34.5%. Granted, these surveys encompass a fair number of academics across locations and fields, and prevent academics from voting for their own university. However, critics argue that QS and THE place too much importance on an essentially subjective measure of sentiment within the academic community.

Recent citations, a proxy for an institution’s research impact, make up 30% of THE and 20% of QS rankings. THE also includes research income from grants and industry (8.5% in total), while ARWU evaluates how many highly cited staff (20%) and papers published in high-profile journals Nature and Science (20%) a university has, in addition to general citations (20%). ARWU’s weighting is the starkest illustration of these rankings’ research-heavy focus.

Unfortunately for students, none of the main rankings involve student surveys. Instead, they rely on proxies for teaching: staff-to-student ratios, international staff and student numbers, and the number of staff with PhDs.

These flawed methodologies lead to four main problems for students. Firstly, they essentially ignore student satisfaction with teaching and overall campus experience, and they use subjective employer surveys in place of easily ascertainable data on graduates’ labour market outcomes, such as employment rates, income or promotions per profession or industry. QS’ Graduate Employability Rankings (under which USyd ranked 5th globally in 2019) consider employer surveys (30%), prevalence of high-profile alumni (25%), industry partnerships in placements and research (25%) and frequency of employer visits to universities, framed as “employer-student connections” (10%), with only 10% allocated to graduate employment rates. Not only is this an imprecise assessment of how well a particular degree prepares students for the real world, it also indicates that the move towards “graduate employability” at universities is pushed by employers, not students, reflecting a growing and divisive trend of corporatisation at universities.
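To see how little weight the employment rate carries, here is a rough sketch of how a composite score could be built from the QS Graduate Employability weights quoted above. The weights come from the figures in this article; the indicator names, the 0 to 100 scale and both sets of university scores are hypothetical, invented purely for illustration, and QS's actual calculation may normalise indicators differently.

```python
# Weights as quoted in this article for the QS Graduate Employability Rankings.
# The dictionary keys are our own labels, not QS terminology.
WEIGHTS = {
    "employer_surveys": 0.30,
    "high_profile_alumni": 0.25,
    "industry_partnerships": 0.25,
    "employer_student_connections": 0.10,
    "graduate_employment_rate": 0.10,
}


def composite_score(indicator_scores, weights=WEIGHTS):
    """Return the weighted sum of indicator scores (assumed to be on a 0-100 scale)."""
    missing = set(weights) - set(indicator_scores)
    if missing:
        raise ValueError(f"missing indicator scores: {sorted(missing)}")
    return sum(weights[name] * indicator_scores[name] for name in weights)


# Hypothetical university A: strong reputation and connections, weak employment rate.
reputation_heavy = {
    "employer_surveys": 90,
    "high_profile_alumni": 90,
    "industry_partnerships": 90,
    "employer_student_connections": 90,
    "graduate_employment_rate": 40,
}

# Hypothetical university B: middling reputation, perfect employment rate.
outcomes_heavy = {
    "employer_surveys": 60,
    "high_profile_alumni": 60,
    "industry_partnerships": 60,
    "employer_student_connections": 60,
    "graduate_employment_rate": 100,
}

print(round(composite_score(reputation_heavy), 1))
print(round(composite_score(outcomes_heavy), 1))
```

Under these made-up numbers, university A scores about 85 and university B about 64, even though every one of B's graduates finds work and fewer than half of A's do.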

Secondly, subjective measures such as teaching reputation surveys, especially those completed by the providers rather than the recipients of teaching, are likely biased towards larger and more established universities, whose work is more visible. Smaller universities producing quality output can therefore find it hard to get recognised. Citations – with all the vagaries and uncertainties of publishing in journals – can be similarly biased. In particular, ARWU includes as criteria the number of alumni (10%) and staff (20%) with Nobel Prizes or Fields Medals. The result is a ranking which focuses on prestige at the very top of the bell curve and which does not necessarily translate into positive results for the vast majority of students, who do not receive instruction or supervision from these laureates.

Thirdly, even if rankings are explicitly research-focused, a problem arises when they are so high-profile that media and universities alike use them simplistically to compare institutions and, critically, to attract students. Most students at most universities are undergraduate coursework students, for whom the research function of their university is largely unseen and bears little personal relevance to the content they learn, the skills they acquire and the quality of education they receive. For a recent high-school leaver tossing up their options, these rankings are ill-suited to judge whether their future course – the educational environment and the concrete value of the skills and qualifications gained – is one of the best in the world. And finally, focusing too intently on rankings runs the danger of universities substantially shifting resources towards research, awards and income-generation, which is acceptable in itself, though not at the expense of teaching.

So how can we fully capture students’ experiences or improve existing methodologies? The most obvious answer is to include concrete graduate outcomes and direct surveys of the student body, weighting these components heavily. Student surveys will still be subjective to an extent, but considering the large sample size and the critical financial stake students have in universities, they must be taken seriously to encourage universities to invest in quality teaching, facilities and resources, and make policy decisions in the best interests of students.

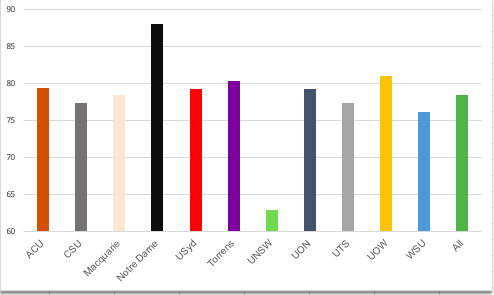

The Commonwealth Government recently released the results of the Student Experience Survey for 2019, revealing that USyd students were the second-least satisfied in Australia (only UNSW ranked lower, largely due to its unpopular trimester system). And rankings such as the THE University Impact Rankings, which measure universities’ research priorities and impact against the UN Sustainable Development Goals, might more accurately reflect a university’s contribution to social progress and global issues that students care about.

The headline numbers tell a different story to what is happening on the ground, in classrooms and on campus, leaving student voices ignored and unaccounted for. Ultimately, regardless of whether a university is research- or teaching-focused, students are the main source of revenue for the sector – and are impacted the hardest by ill-thought-out decisions. They should be front and centre in this public forum.